Regular indexing of your content plays an important role in optimizing your web site for search engines. The data collection, or crawling, is done by automated programs called bots. These programs constantly crawl the web, pick up new and updated information, and send it to another program that stores everything in a database (the search engine's index).

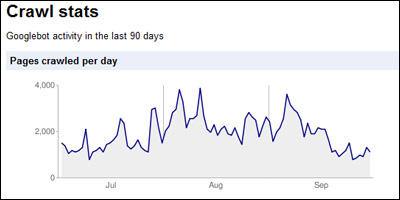

Though most web developers wish and pray that search engines will index new content on their web sites within the hour, it generally doesn’t happen. Many fret over this issue, and rightly so. However, there are simple ways to prompt a search engine bot to visit your web site and pick up new information. The quickest, for Google, is to resubmit an updated XML sitemap via Google Webmaster Tools. Webmaster Tools also displays Googlebot's crawl statistics for your site (see the image below).
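For reference, a sitemap is an XML file following the sitemaps.org protocol: a `urlset` element with one `url` entry per page, each holding a `loc` (the page URL) and an optional `lastmod` date. The Python sketch below shows one way to regenerate such a file before resubmitting it. The `build_sitemap` helper, the URLs and the dates are hypothetical; how you gather your page list depends on your site.

```python
import xml.etree.ElementTree as ET
from datetime import date

# Namespace defined by the sitemaps.org protocol (version 0.9).
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified_date) pairs (hypothetical helper)."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        # A plain YYYY-MM-DD date is a valid W3C Datetime value for lastmod.
        ET.SubElement(entry, "lastmod").text = modified.isoformat()
    # tostring() escapes characters such as "&" in URLs, as the protocol requires.
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Placeholder pages; substitute your own URLs and modification dates.
    pages = [
        ("https://www.example.com/", date(2009, 6, 1)),
        ("https://www.example.com/new-post.html", date(2009, 6, 15)),
    ]
    print(build_sitemap(pages))
```

You would then save the output as, say, `sitemap.xml` in your site root and resubmit that URL in Webmaster Tools. Keeping `lastmod` accurate gives the bot a hint about which pages have actually changed.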

Web site indexing by Yahoo, MSN and Google bots

Botvisit offers a nice service that tells you the date on which your web site was indexed by the Yahoo, MSN and Google search engine bots. There are two ways to check this on Botvisit. You can go to the site every now and then, enter your web site URL and get the information. Or you can download the code for each of the three search engines and paste it into any web page (your homepage, perhaps). The code renders a small image showing the date on which your web site was indexed by that search engine. Check the ones below.
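If you can read your server's raw access logs, you can get a similar answer without a third-party badge. The Python sketch below is not Botvisit's method; it is a minimal illustration that assumes Apache's Combined Log Format and assumes each bot can be recognised by a user-agent substring (`Googlebot` for Google, `Slurp` for Yahoo, `msnbot` for MSN). Keep in mind that it reports when a bot *visited*, which is not necessarily when the page appeared in the index, and that user-agent strings can be faked.

```python
import re
from datetime import datetime

# Assumed user-agent substrings for each engine's bot; real strings vary
# and can be spoofed, so treat a match as a hint rather than proof.
BOT_TOKENS = {"Google": "Googlebot", "Yahoo": "Slurp", "MSN": "msnbot"}

# Combined Log Format: host ident user [time] "request" status bytes "referer" "agent"
LOG_LINE = re.compile(r'\[(?P<time>[^\]]+)\] ".*" \d{3} \S+ ".*" "(?P<agent>[^"]*)"$')


def last_bot_visits(lines):
    """Return the most recent visit time seen for each bot (hypothetical helper)."""
    latest = {}
    for line in lines:
        match = LOG_LINE.search(line.rstrip())
        if not match:
            continue  # skip lines that are not in Combined Log Format
        agent = match.group("agent")
        when = datetime.strptime(match.group("time"), "%d/%b/%Y:%H:%M:%S %z")
        for engine, token in BOT_TOKENS.items():
            if token in agent and (engine not in latest or when > latest[engine]):
                latest[engine] = when
    return latest
```

For example, `last_bot_visits(open("access.log"))` would return a dict mapping engine names to timezone-aware datetimes; the log path is hypothetical and depends on your server setup.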